Da privacy, the new standard?

It has been said that President Trump was nowhere to be found at the time of the handover of power, yet tracking him has never been so simple. Indeed, the NYT revealed that, thanks to the deanonymized location data of a Secret Service agent in charge of the president’s protection, it was able to track Mr. Trump’s whereabouts. The information was collected without the knowledge of the device holders. We are far from data privacy… This example illustrates consumers’ lack of control over their data and the acute lack of understanding of how stored data is used. Such data can be hacked, and storing it is extremely energy-consuming.

Data privacy is becoming a sovereignty issue: the TikTok affair, in which the USA accused the company of monitoring its population on behalf of Beijing, illustrates the opacity surrounding the data collected by these technological mastodons, which are becoming as powerful as states. Dismantling these companies thus becomes almost legitimate and invites us to revisit the Sherman Act (1890), passed when Standard Oil controlled 90% of oil refining and distribution in the United States. A remedy is therefore needed, but arbitration is difficult to achieve, as each state wishes to preserve its own interests.

In all cases, whether provoked by the economic logics of the GAFAM or thought within a framework of governmental paternalism, the collection of personal data is a major issue as it shapes the thinking and behaviour of millions of individuals.

Which leads us to wonder: are the questions of “data privacy” questions of “freedom privation”? Therefore, doesn’t the word “private” associated with data become contradictory?

Personalization is based on intimate knowledge of users and therefore of their private lives.

To get out of this privatization of thought, or should we say, this penetration of data into the intimate space, a new trend is emerging: Data Privacy.

Data Privacy at a glance…

The notion of Data Privacy encompasses both a philosophical and a regulatory approach. The former holds that people wish to be treated as people, not as merchandise, through more transparent and respectful collection, storage, management and sharing of data with third parties. The latter refers to compliance with the applicable privacy laws.

> 3 key elements

What accounts for the current growing concerns about the loss of control over personal data?

1. Enough is enough

Privacy concerns have become acute as companies, governments and other organizations collect more customer data. For most companies, data helps them better understand their customers, so that they can provide customized service and distinguish themselves from competitors. However, some firms have been selling consumer data to third parties, prompting regulators to intervene. Last January, WhatsApp updated its privacy policy to indicate that certain user data would now be shared with its parent company, Facebook. Faced with the resulting outcry, the change was postponed to May 15th 2021. In the meantime, competing messaging applications offering end-to-end encryption have experienced record downloads. Indeed, according to Sensor Tower, Signal was installed approximately 7.5 million times from the App Store and the Google Play Store between January 6th and 10th.

Awareness of the danger of targeted data collection also comes from the experience of authoritarian states. George Orwell is the essential literary reference, as is his new on-screen counterpart, Black Mirror. China’s march towards a dystopia where the state collects data in bulk and uses it is an example of this. But this reality is not limited to authoritarian systems. The laws may be different, but often the tools are very similar. This is even more obvious when comparing China’s rapidly growing social credit system with the decades-old credit rating system that is particularly prevalent in the United States. The latter is constantly being refined through the introduction of new algorithms. In China, hundreds of thousands of people are being denied high-speed train tickets, flights and other bookings because they have received a poor social credit rating. They have few ways to correct their scores. In the U.S., if you don’t pay your car insurance premiums, the insurance company sometimes has the option of remotely turning off the engine at a time of its choosing: the penalty is quite severe, but at least it is directly related to the non-payment.

2. A growing reluctance to serve the attention economy

The term “attention economy” was coined by psychologist, economist, and Nobel Laureate Herbert A. Simon, who posited that attention was the “bottleneck of human thought” that limits both what we can perceive in stimulating environments and what we can do. He also added that the constant and exponential flow of information promotes a poverty of attention. Many firms understand the scarcity of our attention, and are adapting their business models to capitalize on it. For instance, music streaming services like Spotify have two revenue streams: you can either pay money for the ads to disappear (the price of privacy is then very clear: €7/month), or pay with your attention and listen to ads.

Two conflicting trends collide: companies accumulate private data which they wish to protect; at the same time, they are pushed to share this private data to enhance collaboration and personalization. Finally, in a world where nothing is free, the free content offered on the Internet has an economic counterpart. When it is free, you are the product… Put bluntly, what pays on the Internet is capturing 2 precious seconds of the user’s attention. By monetizing and commodifying attention, the new world order has partially sold away its ability to see problems and enact collective solutions.

This leads to polarization into archipelagos of binary thinking. The fact that the collection of personal data ends up shaping opinions and choices is not just something users “suffer”, collateral damage of the economic greed of the Internet giants. The existence of cognitive biases (Homo Economicus, by definition “rational”, no longer exists) has, in the last decade, been increasingly exploited by politicians and regulators themselves to guide the decisions of their citizens, for their own good or that of society. Indeed, Thaler won the Nobel Prize in Economics in 2017 for his work on behavioral economics and nudge theory, and “nudge for public policies” units have flourished in decision-making spheres everywhere. A very concrete example is the appearance of dark patterns: user interfaces designed to subvert or hinder the autonomy, decision-making or choice of users. Instagram’s latest update illustrates this phenomenon: by swapping tabs, Instagram draws into its shop people who reflexively press the icon at the bottom right to view their “notifications”, and sends to Reels (the new video format that mimics TikTok) people who simply wanted to share a new photo. On the surface, it may not seem like much, but these two tweaks are crucial for the brand.

Data privacy aims at regulating this hyper-personalization by giving back autonomy and trust to users.

3. Ecology: the fight of the century

Another contextual element is growing global environmental awareness. Emerging technologies (IoT, cloud, smartphones and AI) have caused an explosion in the volume of data generated by humanity, and with it a growing environmental impact. To give you an idea, by 2030, data centers around the world could be swallowing up 10% of the world’s electricity production, compared to 3% at present. Today, a data center can consume more energy than a mid-size US city, and their growth is exponential. More specifically, a player such as Google stores the equivalent of 23,000 sheets of paper (a stack 2.36 m high) of personal data about an individual, and this in… 2 weeks.

→ Small tip: Regularly clean your mailbox and unsubscribe from mailing lists that no longer interest you. A small step for man, a big step for humanity.

Regulations & initiatives are being implemented to address these growing concerns.

Thinking about data control is not something new and solutions have already emerged. But have we really chosen yet what we want?

Indeed, France has had its “Informatique et Libertés” law (on data processing and civil liberties) since January 6th 1978. The objective was to set up a framework after the political scandal around the Safari project, which aimed to make it possible to build a file on the whole population. A country like France, a champion of freedom, could not remain indifferent to such profiling, and there was a public outcry. Consequently, it became necessary to establish ethical safeguards against the possibilities offered by data processing.

1. Europe is pioneering the topic

Europe is a forerunner on the privacy debates and the arbitration process between the three sides of the triangle described above: privacy, economic efficiency and state security.

Indeed, in Europe, we have the famous GDPR, which is a legal framework that sets guidelines for the collection and processing of personal information from individuals living in the European Union, and gives those individuals greater control over their own data.

The first effects of the new regulations, and the perils of not complying with them, are already being witnessed. Nevertheless, the actors in charge of enforcing the rules should not only be seen as repressive agents. For example, the CNIL (the French regulator) aims to help companies comply with the law in force through multiple contributions, such as the launch of a MOOC, the “RGPD Workshop”.

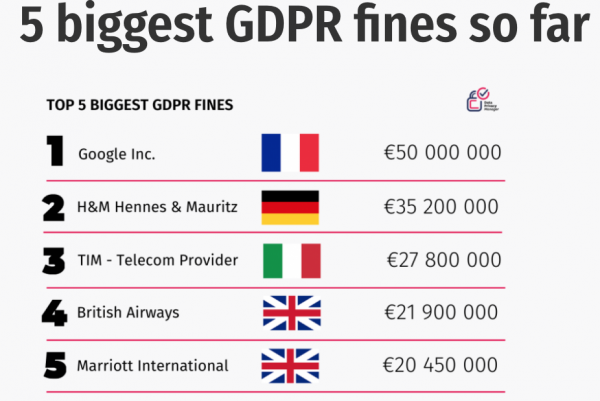

Two levels of GDPR fines:

- The lower level is up to €10 million, or 2% of the worldwide annual revenue from the previous year, whichever is higher.

- The upper level is twice that size: up to €20 million, or 4% of the worldwide annual revenue from the previous year, whichever is higher.
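The "whichever is higher" rule behind the two tiers can be sketched in a few lines of Python. This is a minimal illustration, not legal advice: the function name `gdpr_fine_cap` and its parameters are hypothetical, and it only computes the ceiling of a fine, not the amount a regulator would actually impose.

```python
def gdpr_fine_cap(annual_revenue_eur: float, upper_tier: bool = False) -> float:
    """Return the maximum possible fine, in euros, for the chosen tier.

    Lower tier: EUR 10 million or 2% of worldwide annual revenue.
    Upper tier: EUR 20 million or 4% of worldwide annual revenue.
    In both cases the higher of the two amounts applies.
    """
    fixed_cap_eur, revenue_pct = (20_000_000, 4) if upper_tier else (10_000_000, 2)
    revenue_based_cap = annual_revenue_eur * revenue_pct / 100
    return max(fixed_cap_eur, revenue_based_cap)


# A company with EUR 100 million in revenue: the fixed amount dominates.
print(gdpr_fine_cap(100_000_000))
# A company with EUR 1 billion in revenue: the revenue share dominates.
print(gdpr_fine_cap(1_000_000_000, upper_tier=True))
```

For small and mid-size companies the fixed amount sets the ceiling, while for large groups the percentage of revenue takes over, which is why the rule bites at every company size.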

Recently, the European Commission proposed two legislative initiatives: the Digital Services Act (DSA) and the Digital Markets Act (DMA). The objective is to achieve their adoption in early 2022.

The DSA and DMA have two main goals:

- To create a safer digital space in which the fundamental rights of all users of digital services are protected

- To establish a level playing field to foster innovation, growth, and competitiveness, both in the European Single Market and globally

In light of these profound changes and the growth of data privacy legislation worldwide, organizations will need to do plenty over the next few years to prepare for the new global landscape and bring their businesses in line with these measures.

Regulatory balance is a moving target. Since the beginning of the digital age, technological or commercial innovations have tended to anticipate the rules. This is even more true of AI and other recent advances that rely on the computing power of algorithms. Creeping regulation ultimately promotes the creative process. This can be seen as an advantage for Europe, which could build pioneering and therefore leading companies in this global movement.

3. July 2020: Invalidation of the US-Europe Privacy Shield

Last summer, the Court of Justice of the European Union (CJEU) annulled the Privacy Shield that guaranteed the free flow of data between the EU and the US, on the grounds that personal data transferred to and stored in the US could not be guaranteed a level of protection as high as that provided for in the GDPR. As a result of the CJEU’s decision, the United States is no longer considered to offer adequate protection and therefore has no privileged access to personal data flows from Europe. In practice, European companies will need to have SCCs (Standard Contractual Clauses) with each US merchant in order to continue sending data to them.

Companies worry that the investment needed to comply with GDPR will divert resources from other digital initiatives. In addition, companies are questioning whether the stricter European rules will put European companies at a disadvantage in global competition. On the other hand, some see GDPR slowly becoming a new worldwide standard, giving an edge to European companies, which will have been designed for this standard from the start.

4. Overview on the world

A slow awakening of the US

The recent Netflix documentary, The Social Dilemma, has highlighted what happens to the wealth of personal information people regularly, and willingly, share online. It may be especially concerning, then, to know that companies in the United States aren’t required by federal law to protect this information. The outcome of the Presidential election may be about to change this, however. It’s widely anticipated that the question of data privacy will be a significant priority for the Biden Administration. The issue has been largely overlooked under President Trump, but with many other countries enforcing GDPR-type regulations, it’s time the US acknowledged the real importance of protecting its citizens’ personal information.

The California Consumer Privacy Act (CCPA) is the United States’ first meaningful foray into modern day consumer data protection.

- CCPA: The Act went into effect in California in January 2020. It gives residents the right to know which data are collected about them and to prevent the sale of their data. The CCPA is a broad measure, applying to for-profit organizations that do business in California and meet one of the following criteria:

- Earning at least half of their annual revenues from selling consumers’ personal information

- Earning gross revenues of more than $25 million

- Holding personal information on at least 50,000 consumers, households, or devices.

The CCPA is the strictest consumer-privacy regulation in the United States, which still has no national data-privacy law. The regulation also introduced a new concept: non-personalized advertising, defined as advertising and marketing not based on a consumer’s past behavior.

Other local initiatives have also been put in place. For example, the Massachusetts Institute of Technology (MIT), through the Center for Humane Technology, convinced Apple, Google, and Facebook to adopt, at least in part, the mission of “Time Well Spent”, even if it went against their economic interests. The focus was to evolve from a race for “time spent” on screens and apps into a “race to the top” to help people spend time well. For example, Apple introduced its “Screen Time” features in May 2018. These changes show that companies are willing to make sacrifices, even in the realm of billions of dollars. Nonetheless, they have not yet changed the core logic of these companies. For a company to do something against its economic interest is one thing; doing something against the DNA of its purpose and goals is a different thing altogether.

Other initiatives around the world

Finally, there is a multitude of regulations depending on the country. Canada recently introduced the Digital Charter Implementation Act, India has its Personal Data Protection Bill, and Japan its Act on the Protection of Personal Information. Brazil has the LGPD (General Data Protection Law), which went into effect in August 2020. The LGPD is an overarching, nationwide law centralizing and codifying rules governing the collection, use, processing, and storage of personal data. While the fines are less steep than under the GDPR, they are still significant: failing to comply with the LGPD could cost companies up to 2 percent of their Brazilian revenues.

China too has released its draft Personal Information Protection Law (PIPL), whose public comment period closed on November 19, 2020. What is certain is that data flows are too beneficial to global growth to be heavily impeded by regulation or control, unless a very significant cost is accepted, especially in the context of the Sino-American conflict.

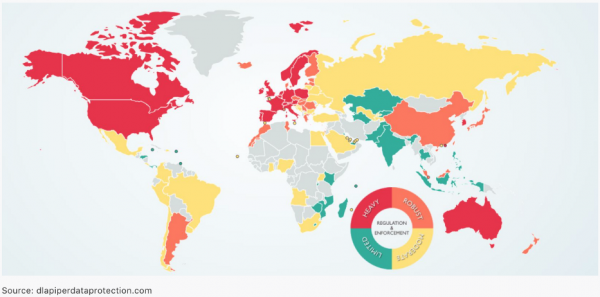

Below is a map showing the intensity of the laws in force.

107 countries (of which 66 are developing or transition economies) have put in place legislation to secure the protection of data and privacy. In this area, Asia and Africa show a similar level of adoption, with less than 40% of countries having a law in place, according to the United Nations Conference on Trade and Development (UNCTAD).

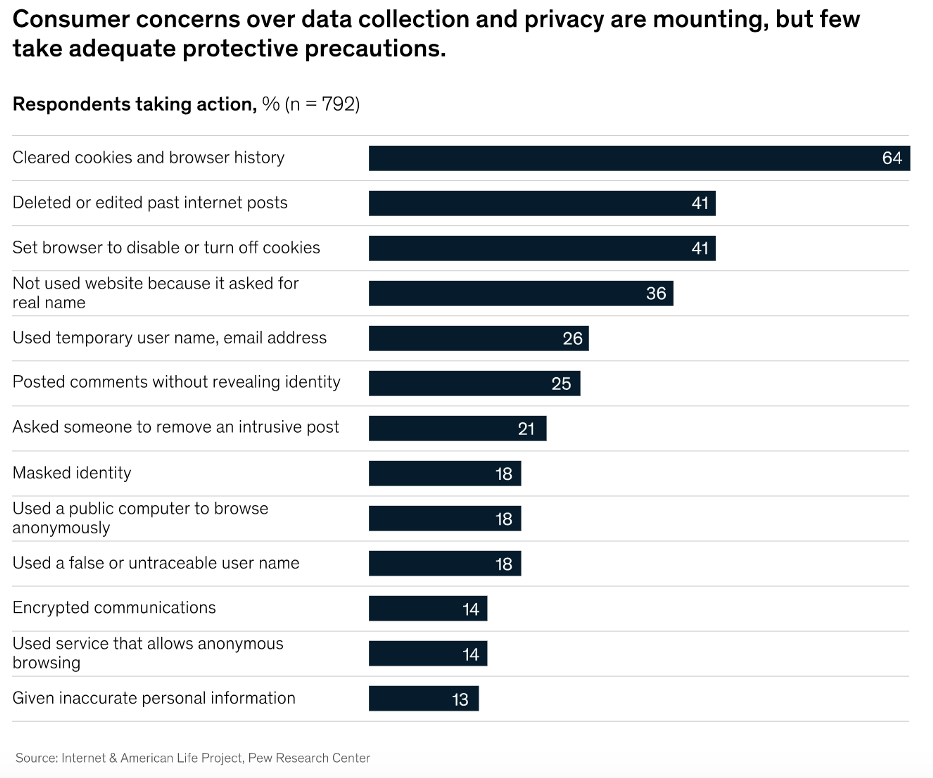

5. However, data privacy implementation is a long journey

It is rather an evolution made necessary by the industrialization of data collection. And there is still a lot to be done, especially in terms of raising awareness, as can be seen from the extremely high cookie acceptance rate (95%). Consent banners are set up in a way that minimizes the user’s willingness to block cookies. Next time, think twice before accepting. Another sign of this slow start is market share: privacy-respecting browsers and search engines such as Brave, Beaker, Qwant, DuckDuckGo, Tenta Browser and YaCy account for less than 1% of browsers installed. A few individual success stories are emerging, though: DuckDuckGo increased its average number of daily searches by 62% in 2020 and, a few weeks ago, surpassed 100 million searches in one day for the first time.

The table clearly shows that there is still a lot to be done to sensitize the population as a whole, and not just a tiny part of the educated and techno-friendly population.

Finally, so far, regulation has not had the desired effect. Indeed, according to DLA Piper, 59,000 breaches of the GDPR were reported but only 91 fines were imposed in the first 8 months after the law came into force.

Therefore, is there an alternative system that properly balances free access and privacy, regulation and efficiency?

A promising idea: financial compensation

If we simplify, the distinction comes down to a financial trade-off: are you willing to pay, or do you prefer free but targeted content, whether you are an individual or a company?

1. Simple calculation

Things are definitely moving, and this simple calculation suggests as much:

French digital marketing market: 5bn€, divided by the number of internet users in France (50m) = 100€ per year per person.

Per month, that is less than a newspaper subscription. By paying a few euros per month, the consumer would thus have access to almost unlimited content with no advertising… It leaves you wondering… In the end, is it really so logical to share one’s personal data?
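The back-of-the-envelope figure above can be reproduced in a short Python sketch. The function name `ad_spend_per_user` is hypothetical, and the inputs are simply the rounded market size and user count quoted in the text, so the result is an order of magnitude rather than a precise price.

```python
def ad_spend_per_user(market_size_eur: float, internet_users: int) -> tuple[float, float]:
    """Return the (yearly, monthly) advertising spend per internet user, in euros."""
    yearly = market_size_eur / internet_users
    return yearly, yearly / 12


# Figures quoted in the text: a EUR 5bn market and 50 million internet users.
yearly, monthly = ad_spend_per_user(5_000_000_000, 50_000_000)
print(f"{yearly:.0f} EUR per year, {monthly:.2f} EUR per month")
# prints "100 EUR per year, 8.33 EUR per month"
```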

Furthermore, Facebook’s advertising revenue per user reached $32 in 2020. According to the OECD, the average annual wage in the United States was $63,100 in 2018. Avoiding all advertising would therefore cost about 0.05% of an average annual salary. Put like that, it gives the vast majority of Americans something to think about.
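The same kind of check can be run on the Facebook figures. The sketch below, with the hypothetical helper `share_of_wage`, expresses the advertising revenue per user as a percentage of the average annual wage. It assumes, as the text does, that a user would pay exactly what they currently generate in advertising revenue.

```python
def share_of_wage(revenue_per_user_usd: float, average_wage_usd: float) -> float:
    """Return advertising revenue per user as a percentage of the average annual wage."""
    return 100 * revenue_per_user_usd / average_wage_usd


# Figures quoted in the text: $32 per user (2020) and a $63,100 average wage (2018).
share = share_of_wage(32, 63_100)
print(f"{share:.3f}% of the average annual wage")
```

Note that the two inputs come from different years, so the ratio is only indicative.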

Nevertheless, the collection of personal data is of course not only negative. What is required is to find the right balance between experience and privacy.

The sharing of enterprise data involves similar tradeoffs between privacy and value, and balancing them requires the same level of care and forethought. With the rise of remote working, employee monitoring is blurring the boundary between personal and enterprise data.

2. New usage for new monetization

Businesses use other companies’ enterprise data in the same ways that they use consumers’ personal data: to monitor compliance, understand behaviour, make predictions, and gain insights into customers or competitors. Sharing data can unlock value by improving existing offerings and creating new ones. For example, connected car data is used to create personalized insurance policies based on driver behaviour or to launch whole new mobility-as-a-service models. Similarly, machine sensor data is used not only to customize maintenance and improve quality control but also to make new options for equipment use available through pay-per-use leasing models.

To support new uses, new data and application marketplaces and ecosystems are taking shape. A whole industry has arisen to aggregate, process, and sell consumer data in order to better understand behavior, advertising effectiveness, infrastructure utilization, and public-health policy effectiveness, among many other applications. Similarly, IoT and other enterprise data is being aggregated and analyzed for new uses. For example, the maritime Automatic Identification System, originally intended to reduce collisions by tracking the identity and location of ships, is now the source of data for a wide range of other applications which can be expensive to exchange, including economic analysis, insurance, and oceanic research, among others.

This is all potentially good for the economy and for business, but there are challenges to address. Once the data is collected then aggregated, it can become harder to protect the identity of the data source and other sensitive information. And as the data is further shared, it can easily be put to uses that go way beyond what the source of the data originally consented to.

Consent as first censor

Consent is the first line of defense when it comes to data privacy. But data from connected devices can be collected without much awareness or consent. Permissions are often buried deep in lengthy legal terms and conditions. Unclear consent agreements lead to a disconnect between the data that users think is being collected and how it is being used. In many instances, users may not even be aware that they are valuable use cases. In a 2019 survey by data aggregator Acxiom, more than 80% of respondents said they were concerned about the collection and use of personal data, but 58% were willing to “make tradeoffs on a case-by-case basis as to whether the service or enhancement of service offered is worth the information requested.”

Regulation drives innovation: lots of exciting projects on this promising market

Data privacy offers the opportunity to reconcile citizens with the use of their data by companies, to regain trust in the use of personal data by exchanging with those in agreement. We believe that strong data privacy is a critical enabler for enhanced service offerings and digital commerce. Regulations that reassure consumers that they can trust vendors make for a positive outcome, because they encourage consumers to do more business. According to a Cisco Data Privacy Benchmark study 2020, most organizations are seeing very positive returns on their privacy investments, and more than 40% are seeing benefits at least twice that of their privacy spend. Companies can realize benefits such as competitive advantage or investor appeal from their privacy investments.

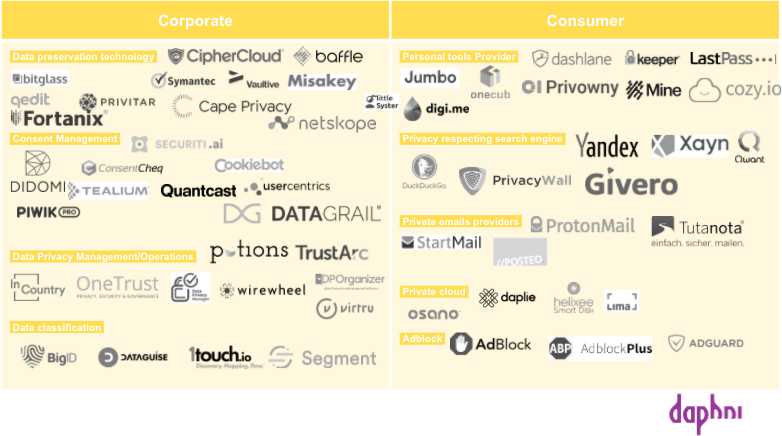

There is already a lot of software at the corporate and individual level to regain control of your data and/or to become compliant. Although the players are better financed on the other side of the Atlantic, Europe is a very serious competitor thanks to its regulation and technological advances.

Below is a mapping of the companies that we believe are “Data Privacy Compliant” and are either business or consumer-oriented.

Corporate

- Data preservation technology: conserving and maintaining both the safety and integrity of data.

- Consent management: companies are exploiting data, and they get a lot out of it. Consent management exists to ensure that the use of data is done with the consent of its users throughout the process of using the data.

- Data privacy operation: achieve and maintain compliance with privacy laws and regulations including replying to consumer requests or data subject requests (DSR/DSAR) and mapping sensitive data.

- Data classification: process of organizing data by relevant categories so that it may be used and protected more efficiently. Data classification is a regulatory requirement, as data must be searchable and retrievable within specified timeframes.

Consumer

- Personal tools providers: tools that help individuals regain control over their data.

- Alternative Search engine: ensures neutrality, privacy, and digital freedom while you search for something on the Internet.

- Private cloud: Encrypting sensitive data before it goes to the cloud with the enterprise

- Adblock

- Email providers

According to Salesforce.com’s 2019 “State of the Connected Customer” survey, 72% of consumers would stop buying a company’s products or using its services out of privacy concerns. Businesses now have no choice but to “reinvent” themselves when it comes to data privacy. It should be seen as an opportunity, not a sanction. Europe has the cards to drive innovation in data privacy, and to be the driving force behind the new challenges that respond to the natural evolution expected by users.

Conclusion

President Macron’s words during his discussion with Niklas Zennström (Founder of Atomico and Skype) still resonate with us: “The United States has Gafam, China has BATX, and Europe has RGPD”. This RGPD means numerous business opportunities, starting with those in the Data Privacy sector, which we believe will be dominated by European companies. Finally President Macron added that “If we do not take care of the Tech, we will have to live with its negative externalities. Tech must make its contribution to climate change, to inequality of opportunity, and with respect for our democracies and our citizens.” At daphni, this is the Tech we wish to finance. All the ambitious projects we receive strengthen our conviction that France and Europe have the capacity to bring out these champions of tomorrow, champions in the image of the world we want to pass on to our children.

If you’re an expert or founder in the data privacy landscape and you’d like to get in touch, please email us at [email protected], [email protected] or [email protected].

A HUGE thanks to Yann de Cheffontaines for his work on this report! And a special thanks to Vincent Gauche from Potions, Tangi Gouez from Dashlane, Romain Gauthier from Didomi, Arthur Blanchon from Misakey, Sebastien Parent from Prolicent, Jonathan Rouach from Qedit, Matthieu Daguenet from Little syster, and Salomé Fofana.

Bibliography

→ Jim Balsillie, « Data Is Not the New Oil — It’s the New Plutonium », Financial Post, 28 mai 2019,

→ Eric Schmidt and Jared Cohen, The New Digital Age: Reshaping the Future of People, Nations and Business, London, Murray, 2014, p. 9–10.

https://www.dsxhub.org/how-to-reduce-the-impact-of-data-centers-on-the-environment/